Archive

-

Firefox 52 setTimeout() Changes

Firefox 52 hit the release channel last week and it includes a few changes to

setTimeout()andsetInterval(). In particular, we have changed how we schedule and execute timer callbacks in order to reduce the possibility of jank. -

Patching Resources in Service Workers

Consider a web site that uses a service worker to provide offline access. It consists of numerous resources stored in a versioned Cache.

What does this site need to do when its code changes?

-

Service Worker Meeting Highlights

Last week folks from Apple, Google, Microsoft, Mozilla, and Samsung met in San Francisco to talk about service workers. The focus was on addressing problems with the current spec and to discuss what new features should be added next.

-

Intent to Implement Streams in Firefox

In the next few months I intend to begin implementing the Streams API in the Gecko codebase.

-

Evaluating the Streams API's Asynchronous Read Function

For a while now Google’s Domenic Denicola, Takeshi Yoshino, and others have been working on a new specification for streaming data in the browser. The Streams API is important because it allows large resources to be processed in a memory efficient way. Currently, browser APIs like XMLHttpRequest do not support this kind of processing.

Mozilla is very interested in implementing some kind of streaming data interface. To that end we’ve been evaluating the proposed Streams spec to determine if its the right way forward for Firefox.

In particular, we had a concern about how the proposed

read()function was defined to always be asynchronous and return a promise. Given how often data isread()from a stream, it seemed like this might introduce excessive overhead that could not be easily optimized away. To try to address that concern we wrote some rough benchmarks to see how the spec might perform.TL;DR: The benchmarks suggest that the Streams API’s asynchronous

read()will not cause performance problems in practice. -

Service Workers Will Not Ship in Firefox 41

In my last post I tried to estimate when Service Workers would ship in Firefox. I was pretty sure it would not make it in 40, but thought 41 was a possibility. It’s a few months later and things are looking clearer.

Unfortunately, Service Workers will not ship in Firefox 41.

-

Service Workers in Firefox Nightly

I’m pleased to announce that we now recommend normal Nightly builds for testing our implementation of Service Workers. We will not be posting any more custom builds here.

-

Initial Cache API Lands in Nightly

Its been two busy weeks since the last Service Worker build and a lot has happened. The first version of the Cache API has landed in Nightly along with many other improvements and fixes.

-

That Event Is So Fetch

The Service Workers builds have been updated as of yesterday, February 22:

-

A Very Special Valentines Day Build

Last week we introduced some custom Firefox builds that include our work-in-progress on Service Workers. The goal of these builds is to enable wider testing of our implementation as it continues to progress.

These builds have been updated today, February 14:

-

Introducing Firefox Service Worker Builds

About two months ago I wrote that the Service Worker Cache code was entering code review. Our thought at the time was to quickly transition all of the work that had been done on the maple project branch back into Nightly. The project branch wasn’t really needed any more and the code could more easily be tested by the community on Nightly.

Fast forward to today and, unfortunately, we are still working to make this transition. Much of the code from maple is still in review. Meanwhile, the project branch has languished and is not particularly useful any more. Obviously, this is a bad situation as it has made testing Service Workers with Firefox nearly impossible.

To address this we are going to begin posting periodic builds of Nightly with the relevant Service Worker code included. These builds can be found here:

-

Implementing the Service Worker Cache API in Gecko

For the last few months I’ve been heads down, implementing the Service Worker Cache API in gecko. All the work to this point has been done on a project branch, but the code is finally reaching a point where it can land in mozilla-central. Before this can happen, of course, it needs to be peer reviewed. Unfortunately this patch is going to be large and complex. To ease the pain for the reviewer I thought it would be helpful to provide a high-level description of how things are put together.

-

Composable Object Streams

In my last post I introduced the pcap-socket module to help test against real, captured network data. I was rather happy with how that module turned out, so I decided to mock out dgram next in order to support testing UDP packets as well.

-

Writing Node.js Unit Tests With Recorded Network Data

Automated unit tests are a wonderful thing. They help give you the confidence to make difficult changes and are your first line of defense against regressions. Unfortunately, however, unit tests typically only validate code against the same expectations and pre-conceived notions we used to write the code in the first place. All to often we later find our expectations do not match reality.

One way to minimize this problem is to write your tests using real world data. In the case of network code, this can be done by recording traffic to a pcap file and then playing it back as the input to your test.

In this post I will discuss how to do this in node.js using the pcap-socket module.

-

Making Progress on Personal Projects

Sometimes I wonder how many people consider personal projects, but never even get started because they feel they don’t have the time? How many amazing and interesting ideas lie unrealized due to day-to-day commitments?

-

Naming the Project: FileShift

-

Working with NetBIOS in node.js

Lately I’ve been caught in the middle of a dispute between my Xerox scanner and Mac OS X. The Mac only wants to use modern versions of SMB to share files and is giving the scanner the cold shoulder. In an attempt to mediate this issue I’ve turned to hacking on ancient protocols in node.js.

So far I’ve tackled NetBIOS and thought I would share some of the code I’ve come up with.

-

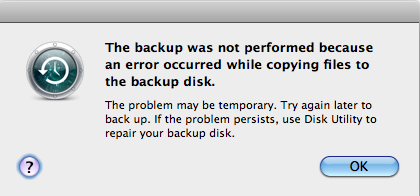

Time Machine and npm

Do you develop npm modules on Mac OS X? Do you also use Time Machine to backup your work?

If you answered yes to both those questions, then there is a good chance you will be looking at this dialogue box at some point.

-

Xerox + Apple === Node.js